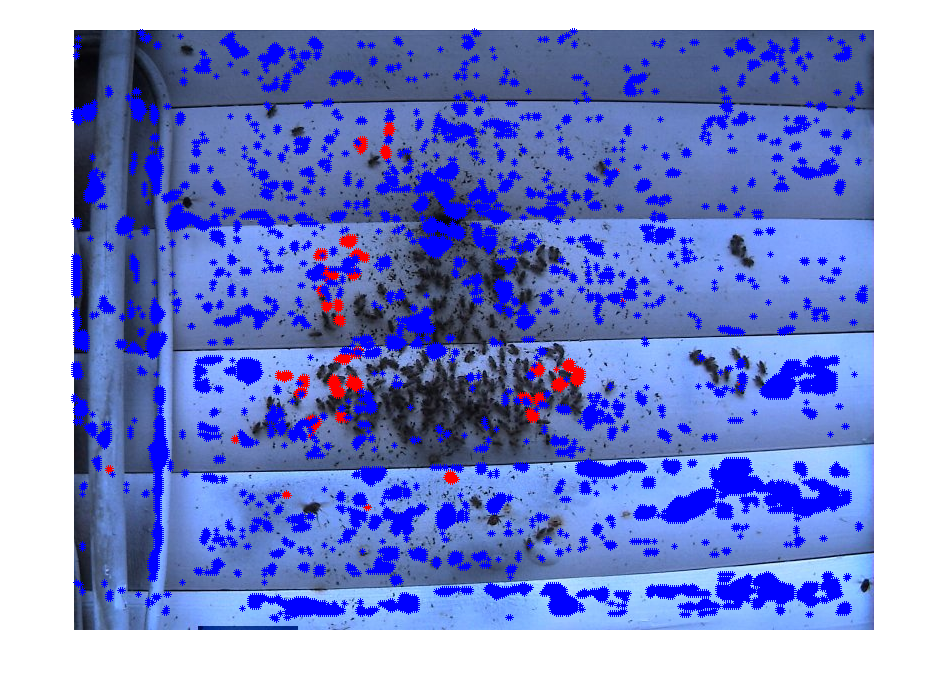

The result looks good in the upper-middle region. That region is a big block of mud in the original image, and the index table shows it as a different texture from the bees.

UCSD CSE190a Haili Wang
